Is Bluesky gonna be a nazi bar?

What happens when a small band of crypto enthusiasts, with innovative ideas around federation and moderation for a distributed social media protocol, finds 10,000 left wing shitposters playing in their demo instance?

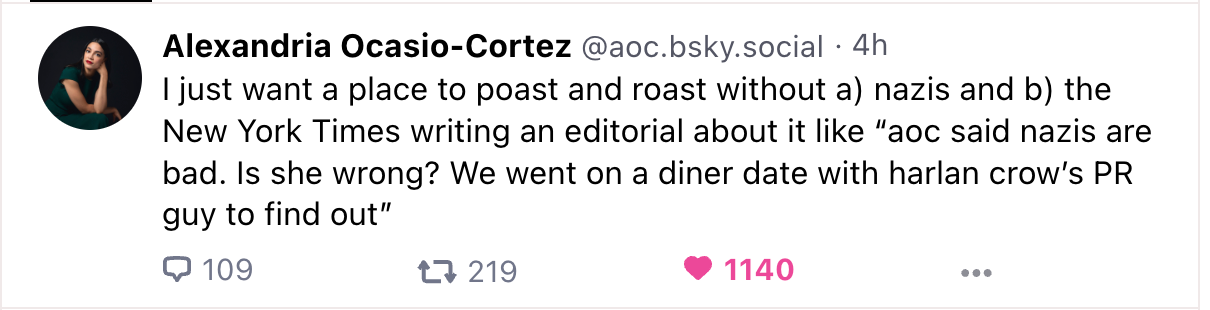

Last week, Bluesky Social, the new and shiny invite-only Twitter clone, had a moment. Some of the biggest poasters from Twitter, led by core Black Twitter members and followed by a massive number of queer, POC, trans, and left wing posters – including AOC – joined. AOC reflected on the experience with this post:

Hmmm, not so fast I think.

There is a lot of work to be done before this becomes a safe place on the internet – that is, mostly free of racism and transphobia – and despite all the fun being had, and significant good intentions, I'm seeing more red flags than green ones.

The bans

During this larger moment were two pivotal submoments: the banning of a user who was being all nazi, and the bullying off the platform of noted "just asking questions" transphobe Matthew Yglesias.

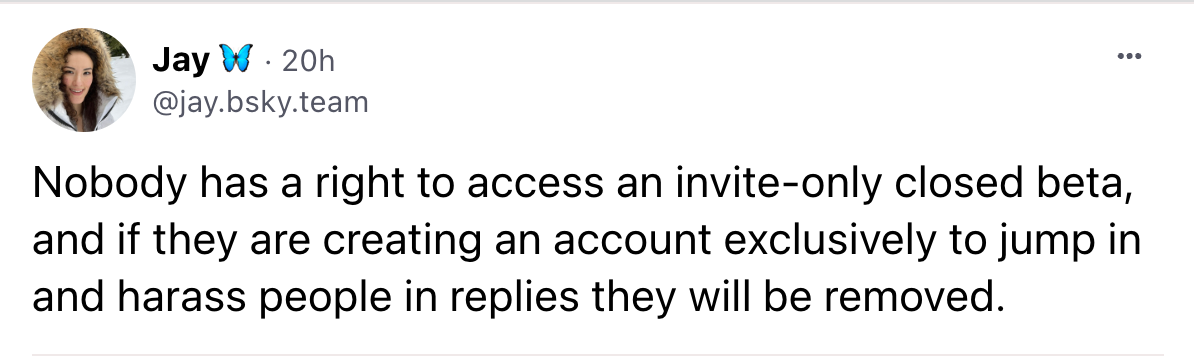

The cancelling of the nazi was of particular note. Jay Graber, Bluesky's CEO, posted this explanation:

This is good, but not great. I would like the response to have been "No nazis" but the actual response was "don't cause trouble during closed beta". Which makes you wonder, what happens after the closed beta? Are they then allowed to create an account to exclusively harass people? What if they only harass people part of the time?

Similarly, Matthew Yglesias arrived on the platform. He is a well-known and notorious faux-liberal transphobe of the "just asking questions" sort. Unfortunately for him, the new influx of users included a large contingent described as "Trans and Queer Shitposters". They were not amused. One user got banned for threatening him with a hammer:

it is official: I am the first person to get banned from bluesky pic.twitter.com/b91CBXFXJC

— Ms. Hannah :) 🦖🦕 (@Hannah_bmbmbm) April 28, 2023

Matt is still around, though he stepped away for a bit.

What are Bluesky's values?

To have a guiding light for how to deal with these situations, one needs values. Or at least rules, which often are a manifestation of values.

Bluesky (that is, the instance run by the company) seems not to have a set of rules or values. (In fairness, CEO Jay Graber says she is writing some.) However, if we were to infer rules from the moderation actions, we would come up with this:

- transphobes ✅

- bullying transphobes ❌

- nazis ✅

- causing trouble during a closed beta ❌

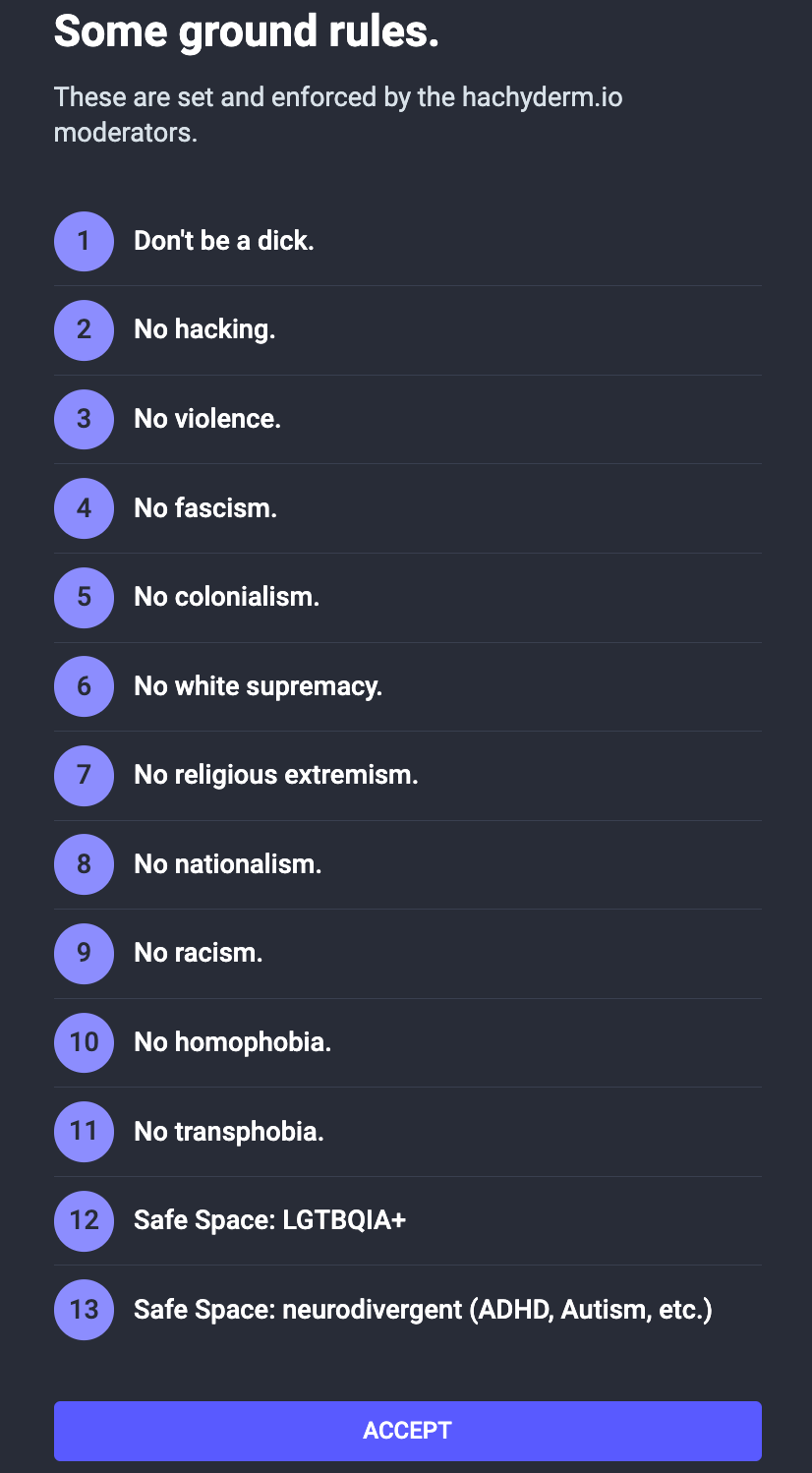

I'd like to compare this to Hachyderm, a node of the Mastodon federated service. They have an explicit set of values, a code of conduct, and detailed explainers of the rules and values, including how they moderate. Here's what you see when you sign up:

But wait there's more

An important caveat here is that this is all incredibly new. While new social networks are supposed to have this shit done in advance, I don't think Bluesky planned for a ton of big shot poasters to suddenly arrive on the single demo instance that they run. The discussion is underway, and the CEO has said she is working on this stuff literally as I write this.

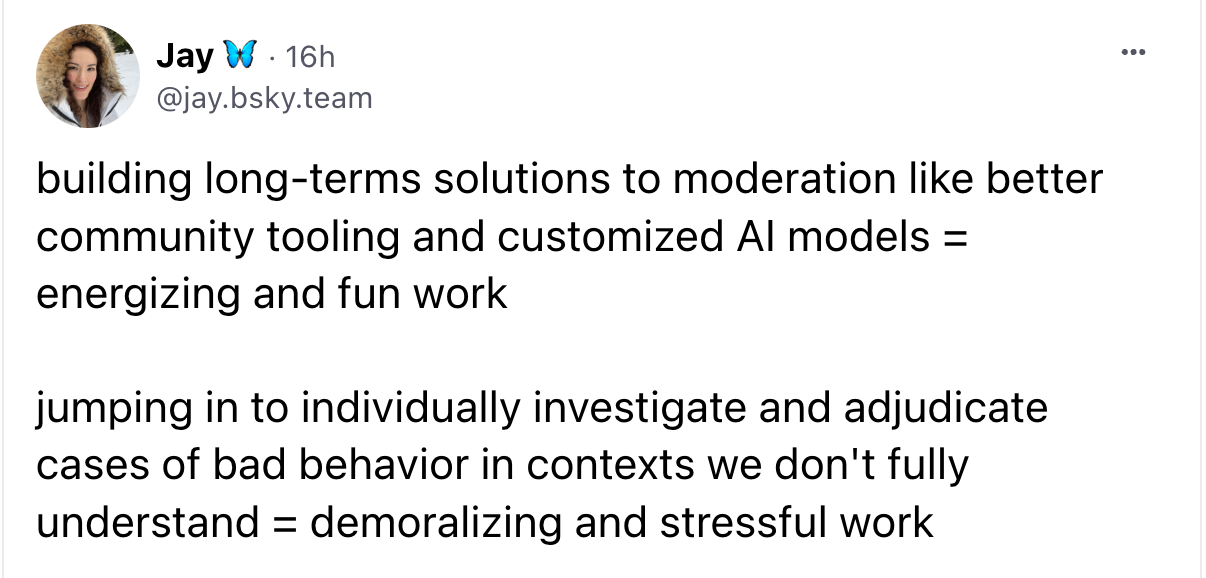

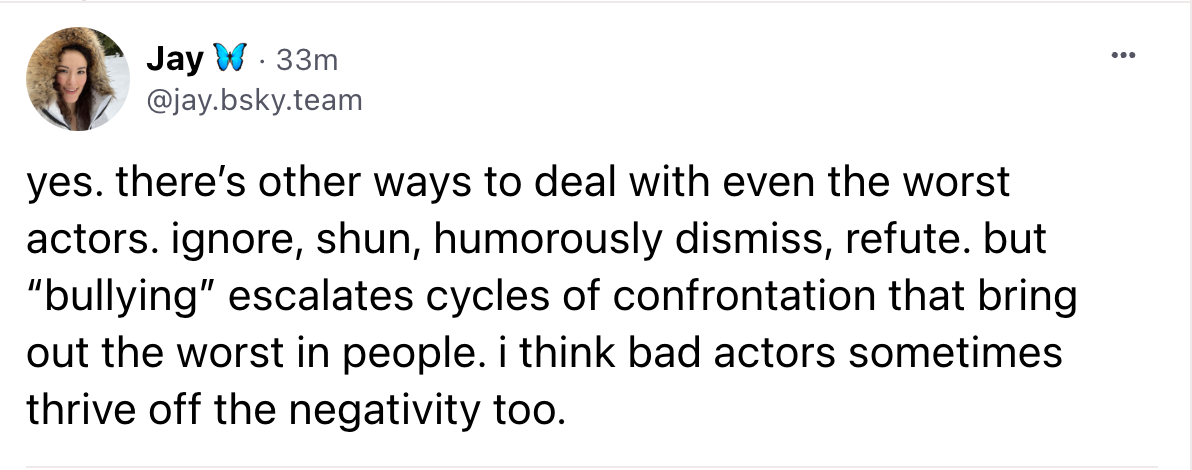

I think Jay is well intentioned. But she has hinted at how she thinks about it:

For context, this skeet was discussing a team member who was getting burnt out doing individual moderation, which is expected.

But at the same time I do read a naivety about the difficult task of moderation. Moderation is hard, grueling work that is essential for communities. It can be aided by AI and algorithms, but they cannot do the work without significant tedious work by humans, preferably those with significant experience of protecting marginalized community members. And that work needs to be guided by clear rules and values.

That naivety is also evident from what Bluesky has written in the past.

In a post entitled "Composable Moderation", they discuss how people can bring their own moderation algorithms. Techdirt liked it, as they do not believe any one service or company can be the town square. Alas, whether it wanted it or not, Bluesky has got itself a town square now, and how the humans behind Bluesky handle moderation of users and content will decide whether the town square is going to have a nazi bar [1] in it or not.

And the people who just joined, primarily led by Black Twitter, with large queer, trans, and POC contingents, do not like nazi bars.

What to do?

I think the people running Bluesky are well meaning but do not have the experience of harassment that lots of the new users, especially the trans users, have. The people who for example are advocating for bullying Matthew Yglesias off the platform are very familiar with what happens when you don't. They have just spent a year on the receiving end of what happens when you let racists and transphobes on a platform and tell users to just block and ignore them. If Bluesky does this, essentially platforming these people, I believe they will lose the good will of the users they currently have.

Instead, Bluesky needs to ask what's important: the safety of its users or letting problematic speech stand as long as it's not causing too much trouble. They need to write down the values they intend to moderate with so that users can decide if this is a place they truly want to get settled.

In my opinion, they need to ban transphobia, racism, etc., and also disallow people with a history of transphobia or other discrimination from joining this Bluesky instance – Bluesky is federated after all, so those arseholes can go make their own racist transphobic instance instead.

They also need to hire experienced moderators – 8 engineers and one community manager isn't going to cut it.

Finally, I would reach out to the fine folks at Hachyderm who have done a stellar job of exactly this for the last year.

Final words

I will say I'm very much enjoying my time on Bluesky, and am rooting for the folks behind it. This post is written with love for the beautiful community that I'm seeing being built, one that I would love to see preserved. Good luck!

(Note, literally as I write this, discussions on the topic are happening on Bluesky, such as here).

I'm on Bluesky, Twitter, and Hachyderm.

[1] The nazi bar, if you're unfamiliar with the metaphor.

I was at a shitty crustpunk bar once getting an after-work beer. One of those shitholes where the bartenders clearly hate you. So the bartender and I were ignoring one another when someone sits next to me and he immediately says, "no. get out."

— Michael B. Tager (@IamRageSparkle) July 8, 2020